Commencing Fundraising to Purchase our Building

We are in discussions with our landlord hashing out the details about selling the building (to us) at the

6:30-8:30, hang out with some other Linux fans, talk about Linux, use Linux, listen to interesting talks, give talks,

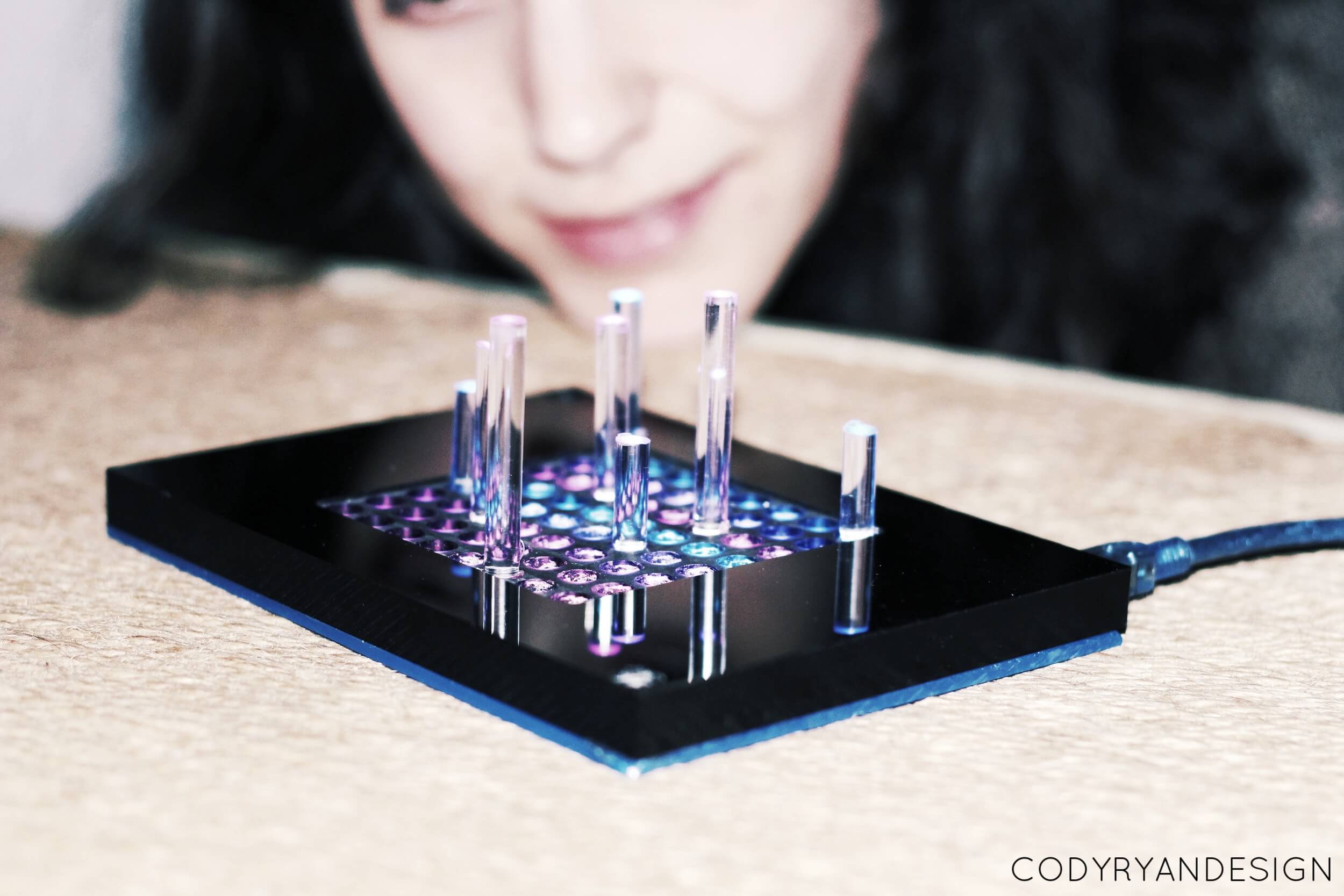

Monday nights are a great time to hang out in Electronics. Alternating between NERP (Not Exclusively Raspberry Pi) and

Learn to make mead, cider, beer etc., bring beer, talk about beer, drink some beer. Chill beverage making club

Join us on Saturday, April 13th @ 7pm for a night of fun, food, and music to celebrate 15

Hey all- Since we are not currently offering the-more-the-merrier office hours for authorizations, I have created this sheet to

To our members, The Mayor of Chicago has issued a stay at home advisory for the City of

What? :: Members create original designs. Members vote on the winner. The winning design gets printed on things (think

Site Design by Kevin Huemann